In Office 365, audit is king (if you turn it on!). Nearly every action that you can take in the environment is recorded and stored for 90 days. For the compliance manager in your organisation, the new audit log search tool is going to make them a happy person!

But what if you want to be able to do something with the data after 90 days? Well basically you can’t. The data is gone and that’s that.

However…

You can take the data offline via the Microsoft Office 365 Management API. This API provides a number of ways to accumulate the data from your Office 365 tenant into an offline data store of some form. This can be done by registering a web hook that tells Office 365 what events you want to be notified about (Data is PUSHED to your solution), or you can request the data via a REST call to the API (You PULL the data that you want.)

Just recently, I had a client that had built an HR system in Office 365 for storing documents relating to the Employees in their organisation. Now this information is obviously very sensitive and controlling who has access to this data is obviously one of the strengths of SharePoint Online with its highly configurable permission model. However no system is perfect and as ever the admins ‘could’ give themselves or others permission to access content that they should not perhaps have. This is where auditing comes to the fore as data within the audit cannot be deleted or removed by the admins. Whilst the 90 days provided 3 months of audit log search capability, it didn’t protect the organisation for a long enough period of time (in this case the organisation wanted to keep the records for 7 years!)

In order to achieve this requirement, it was decided that we would extract the Audit records from Office 365, then process them to store only the audit records (Specifically the File Operations – Read, Edit, Upload, Download etc) that related to one of the eight libraries used to store employee data in the system.

Because it is not possible to request a pre-filtered audit log from Office 365, it was necessary to download the entire audit content for a single day, then process this offline to create a new single CSV file that contained all of the relevant log files for that particular day. This task is performed one day in arrears to allow time for all of the audit log aggregation to happen as some audited elements in Office 365 can take up to 24 hours to appear.

Once this filtered data was collated into a new CSV file, this file was then uploaded into a restricted library in the Office 365 site. The key reason for doing this is to enable the use of eDiscovery search in the future when trying to locate audit actions relating to a specific document.

Full disclosure here, the basics of accessing the Audit Blobs and then processing the downloaded manifests came from the OfficeDev GitHub repo. I used this to learn what was needed, then re-wrote the scripts from the ground up to achieve what I wanted.

The intended process…

- Scheduled timer job at 7am starts the extract process.

- Extract process checks when the last successful extraction was and then works out how many executions it needs to run before it reaches the required Offset of Today – 1 day.

- For the chosen day, the script connects to Office 365 and requests all Audit Blob manifests for the given time frame (Note: I was processing a day at a time. You could choose to break this down into smaller timeframes and run the script more often if you like.)

- Once all of the available Audit Blob manifests are downloaded, these are processed in turn to download the actual Audit Log Entries that the Audit Blob Manifest points to.

- As each Audit Log Entry collection is downloaded, the contents that match the URL requirements are added to an in-memory collection.

- Once all audit log entry collections have been processed, the filtered data is written out to a CSV file and uploaded to Office 365.

The Script…

I can’t re-produce the script in its entirety here, but what I will do is break down how we achieved the extraction process and how we processed the files for uploading into SharePoint with the appropriate code snippets. A much cut down version of the script is included at the end. It works and does what is needed but is NOT production ready by design! Use at your own risk.

The first thing we need to do is to create an App-Only permission that allows us to call into the Office 365 Graph using an unattended PowerShell script. If you’ve read my blog recently, I showed you how to do just that in http://www.myfatblog.co.uk/index.php/2017/02/powershell-access-to-the-graph-api-2/

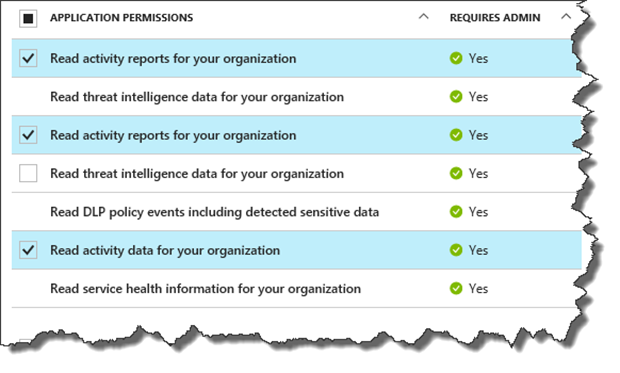

Follow that process, but instead of giving it the Microsoft Graph permissions that are shown in that article, choose the Microsoft Office 365 Management API and choose the following permissions:-

With that in place and the relevant Client ID, Client Secret and the Tenancy ID, we’re ready to start extracting the audit data.

Well actually not quite.. The first thing we need to do is create a subscription, and this can take up to 24 hours before it has any data available. This is because of the way that Microsoft stores your audit data across multiple servers, with your subscription serving to tell MS to aggregate the data for you into a subscription pool.

NOTE: This stored data is available for 7 days before it’s removed! If you attempt to retrieve it, you’ll get the following error:-

Invoke-WebRequest : {“error”:{“code”:”AF20051″,”message”:”Content requested with the key 20170526153048272006208$20170526153048272006208 audit_sharepoint$Audit_SharePoint has already expired. Content older than 7 days cannot be retrieved.”}}

To create a subscription, we just need to make a REST call to our Office 365 tenancy, and tell it what workloads we want to include in the subscription. In this case, I was only interested in SharePoint (And by association OneDrive) but you can also include Exchange and other aspects of O365.

The first thing we need to do is convert our App ID and Secret into an oAuth token that we can use to access the system. As I need to renew this token from time to time, I created a small function for this called get-oAuthAccesstoken.

Note: the following tokens are loaded into the script by a config file. You will need to replace these with your values.

$clientID, $clientSecret, $tenandomain.

function get-oAuthAccessToken()

{

# Retrieve Oauth 2 access token

$body = @{grant_type="client_credentials";resource=$resource;client_id=$clientID;client_secret=$clientSecret}

$oauthToken = Invoke-RestMethod -Method Post -Uri "https://login.windows.net/$tenantdomain/oauth2/token?api-version=1.0" -Body $body

return $oauthToken

}

With the token available, we create a HeaderParams object and then make the relevant REST call.

$oauth = get-oAuthAccessToken

$headerParams = @{'Authorization'="$($oauth.token_type) $($oauth.access_token)"}

Invoke-RestMethod -Method Post -Headers $headerParams -Uri "https://manage.office.com/api/v1.0/$tenantGUID/activity/feed/subscriptions/start?contentType=Audit.SharePoint"

Once this has returned a response, there’s nothing to do but wait. Some data will start appearing soon enough but I’d advise coming back in 24 hours to be certain of having data to play with.

Some time later…

With the subscription well established, we can now use a REST endpoint to ask Office 365 for a list of the available audit blobs from a specific time period. We do this by crafting a request that details the Start and End of the period and the ContentType that we’re interested in.

The full REST request URI looks like:-

Quite a mouthful, but the key entries in the querystring are:-

| contentType | Audit.SharePoint |

| startTime | 2017-05-01T00:00:00Z |

| endTime | 2017-05-01T00:00:00Z |

Note: The dates are formatted in TangoZulu time YYYY-MM-DD T HH:MM:SSZ (Without spaces)

With the URI created, we can make a call to it using the invoke-WebRequest cmdlet to get our first block of blob manifests. (Note: We use invoke-WebRequest rather than invoke-RestMethod because we want to view the headers from the response which you can’t do with invoke-RestMethod)

$rawBlob = Invoke-WebRequest -Headers $headerParams -Uri "https://manage.office.com/api/v1.0/$tenantGUID/activity/feed/subscriptions/content?contentType=Audit.SharePoint&startTime=2017-05-01T00:00:00Z&endTime=2017-05-01T23:59:59Z"

If you look at the $rawBlob object, we should have values for both Content and Headers. If we convert the content object to JSON, we can view the data that is returned. ($rawBlob.Content | convertfrom-JSON)

Each block of data contains:-

- ContentUri – The web address to retrieve that particular blob of data.

- ContentId – A unique ID for that blob.

- ContentType – Which particular audit type the data relates to.

- ContentCreated – When the content was added to the subscription.

- ContentExpiration – When the content will be removed from the subscription.

If we only have a small amount of data such as in my test system, then there may be only a single page of blobs. In reality this is extremely unlikely and you need to check for the presence of the NextPageUri header. This provides you with the URI to request the next page of data for this blob.

In order to work with this, we need to repeat the request until the header is gone, adding the response data to an in memory collection.

do

{

$rawBlob = Invoke-WebRequest -Headers $headerParams -Uri "https://manage.office.com/api/v1.0/$tenantGUID/activity/feed/subscriptions/content$auditSuffix"

write-host "Package length:" $rawBlob.Content.Length

foreach ($blobPackage in $rawBlob)

{

$blobs += $blobPackage.Content | ConvertFrom-Json

}

}

while ( $rawBlob.Headers.NextPageUri)

With the data from those requests consolidated into a single much larger blob manifest, we can now process each of those blob entries and recover the records that they hold.

foreach ($blobDataSource in $blobs)

{

#Note, you may need to keep an eye on your oAuth token expiry here!

#You can do this by comparing the UnixDate in $oAuth.expires_on

#And refreshing the token if there's less than 5 minutes to go.

$thisBlobdata = Invoke-WebRequest -Headers $headerParams -Uri $blobDataSource.contentUri

$blobDataJSON = $thisBlobdata | convertfrom-json

$totalRecordCount += $blobDataJSON.Count

$filteredBlobData = $null

$filteredBlobData = $blobDataJSON | ?{$_.ObjectId.tolower() -like $AuditSearchString}

foreach ($filteredBlob in $filteredBlobData)

{

$unstructuredAuditData += $filteredBlobData

$filteredCount++

}

}

Note the warning about the Access Token. For longer running scripts this may expire and you’ll want to refresh it if needed.

The code above, loops through the list of Blob data source URIs and retrieves each one in turn. It then processes the returned data and checks for any audit entries where the ObjectID (This is basically the URL for the Document or Page in SharePoint) is similar to our search path.

You can of course do much more complex analysis at this point, our requirement is simply for a path to a single site collection.

What we’re left with is an unstructured collection of audit records. I say unstructured, because it’s important to understand that Microsoft pull these records from multiple servers so the likelihood is that some of the records are out of order. You can fix this with a simple sort-object cmdlet.

$sortedData = $unstructuredAuditData | Sort-Object -Property CreationTime

And then finally, this object can be written out to a CSV file (Or any data store of your choosing!)

For our solution, it was decided that the CSV file would be uploaded into a SharePoint Online document library with a 7 year default retention policy on it. This ensured that the records were kept for no longer than required and also that they could be searched using the eDiscovery tool in the future.

Here’s the sample script, it works (You just need to add your values) but is NOT production ready!

Paul.

Footnote: Throttling

Occasionally, Office 365 may think you’re making to many requests in a given period. If this happens then you may receive a Web Exception during the invoke-WebRequest command. To avoid this, you’ll need to ensure that you implement a back off function around the invoke-WebRequest.

We achieved this by wrapping the invoke command in a do/while loop, with a success flag set to $false initially. Inside the Try block, the invoke-WebRequest is processed followed by the $SuccessFlag = $True. If the Try/Catch is triggered the flag is never set to $true so the invoke is repeated as needed.

In the catch block, we look for the Error code in the message to be set to AF429.

$errorCaught = $error[0].ErrorDetails

$errorJSON = $errorCaught.Message | convertfrom-json

if ($errorJSON.error.code -eq "AF429")

{

start-sleep -m 500

}

else

{

throw

}

Update on Throttling

One of my readers Emmet pointed out in comments that the “PublisherIdentifier” is now available to ensure that your app uses it’s own quota to ensure that your are only throttle by your own requests and not others using the tenant. This was added to the schema after I wrote this script, but is a nice improvement to ensure that these AF429 errors are of your own making and not someone elses!

Further details are in the API Referencer and also the Troubeshooting documents.

https://msdn.microsoft.com/en-us/office-365/office-365-management-activity-api-reference

https://msdn.microsoft.com/en-us/office-365/troubleshooting-the-office-365-management-activity-api

3 comments

Good post. On the throttling, if you provide the PublisherIdentifier in your API posts, you will get better throughput.

https://msdn.microsoft.com/en-us/office-365/office-365-management-activity-api-reference

Please advice on which tool we have to fire the command?

Ex: $clientID, $clientSecret, $tenandomain.

function get-oAuthAccessToken()

{

# Retrieve Oauth 2 access token

$body = @{grant_type=”client_credentials”;resource=$resource;client_id=$clientID;client_secret=$clientSecret}

$oauthToken = Invoke-RestMethod -Method Post -Uri “https://login.windows.net/$tenantdomain/oauth2/token?api-version=1.0” -Body $body

return $oauthToken

}

Author

This is a PowerShell script, running on a machine that also has the PNP cmdlets on it to allow the script to upload the extracted files into SharePoint. You don’t need to do this though, you could just as easily write the extracted data into a SQL data warehouse or Azure DB.